Are you ready to learn everything about big data? Every day, people around the world use social media platforms, mobile applications and websites for various purposes. If you think you use these platforms only for browsing, you are wrong. As you navigate, a lot of data is sent to the systems’ databases: transactions made, time spent, social media likes, comments posted, content watched and more. For example, according to one statistic, more than 500 terabytes of new data are recorded in Facebook’s databases every day.

What happens to this constantly generated data when we open an app, search on Google, or travel somewhere with our mobile devices? Huge collections of valuable information emerge that companies and organizations need to manage, store, visualize and analyze. Traditional data tools are not equipped to handle such complexity and volume, so a set of specialized big data software has been designed to manage this load.

Before we get into big data, let’s clarify what data is. Data is any set of quantities, characters, or symbols on which a computer performs operations, and which can be stored and transmitted in the form of electrical signals and recorded on magnetic, optical, or mechanical recording media.

What is Big Data?

Big data, in a way, means “all data”. It refers to datasets so large that they are not easy to process with traditional methods. The shortest definition of big data is data that is too big for conventional computing tools to process. It is also data that keeps growing.

The concept of big data is relatively new and reflects both the increasing amount and the changing types of data now being collected. Big data proponents often refer to this as ‘datafying’ the world. As more of the world’s information is digitized and moves online, analysts can start using it as data.

Everything You Do Online Is Now Stored And Tracked As Data

Reading a book on your Kindle generates data about what you’re reading, when you’re reading, how fast you’re reading, and so on. Similarly, listening to music generates data about what you listen to, when and in what order. Your smartphone is constantly uploading data about where you are, how fast you move, and what apps you use. Another important thing to keep in mind is that big data is not just about the amount of data we produce; it’s also about all the different types of data (text, video, call logs, sensor logs, customer transactions, etc.).

What Are the 7 Vs of Big Data?

Big data was initially characterized by three Vs: volume, variety and velocity. These characteristics were first identified in 2001 by Doug Laney, then an analyst at consulting firm Meta Group Inc. Gartner popularized them further after acquiring Meta Group in 2005.

- Volume: Volume, the large amount of data stored, is the characteristic most often associated with big data. A big data environment need not contain large amounts of data, but most do because of the nature of the data collected and stored. Clickstreams, logs, and stream processing systems are among the sources that consistently generate large volumes of data.

- Velocity: Velocity refers to the speed at which data is generated and must be processed and analyzed. In most cases, large data sets are updated in real or near real time, rather than through the daily, weekly, or monthly updates done in many traditional data warehouses. Managing data velocity is also important as big data analytics expands into machine learning and artificial intelligence (AI), where analytical processes automatically find patterns in data and use them to generate insights.

- Variety: Variety refers to the different sources and forms in which data is collected, such as numbers, text, video, images and audio. Big data encompasses a wide variety of data types: structured data such as transactions and financial records; unstructured data such as text, documents and multimedia files; and semi-structured data such as web server logs and streaming data from sensors. By some estimates, roughly 95% of all big data is unstructured, meaning it doesn’t fit easily into a simple, traditional model. Everything from emails and videos to scientific and meteorological data can create a huge stream of data, each with its own unique characteristics.

- Veracity: Veracity refers to the degree of accuracy in datasets and how trustworthy they are. Raw data collected from various sources can cause data quality issues that are difficult to detect. If not corrected by data cleansing processes, bad data leads to analytics errors that can reduce the value of business analytics initiatives. Data management and analytics teams also need to make sure they have enough accurate data to produce valid results.

- Variability: The meaning of data is constantly changing. The meaning of words in unstructured data can vary depending on context. For example, language processing by computers is extremely difficult because words often have several meanings. Data scientists must account for this variability by creating complex programs that understand context and meaning.

- Visualization: Data should be understandable by everyone. Once the data is analyzed, it needs to be presented visually so that users can understand it and act on it. Visualization presents complex data in a way that tells the data scientist’s story, turning data into information and information into stories.

- Value: For data to be useful, it must be combined with rigorous processing and analysis.

No matter how many Vs you prefer in big data, one thing is certain: Big data is here and it’s growing. Every organization needs to understand what big data means to them and how it can help them. The possibilities are truly endless.

Why is Big Data Important?

Companies use big data in their systems to improve their operations, provide better customer service, create personalized marketing campaigns and take other actions that can increase profits. Businesses that use it effectively have a potential competitive advantage over those that don’t, as they can make faster and more informed business decisions.

For example, big data provides valuable information about customers that companies can use to refine their marketing, advertising and promotions in order to increase customer engagement and conversion rates. Both historical and real-time data can be analyzed to assess the evolving preferences of consumers or corporate buyers, enabling businesses to become more responsive to customer wants and needs.

Big data is used in virtually every industry to identify patterns and trends, answer questions, learn about customers, and tackle complex problems. Companies and organizations use information for many reasons, including growing their business, understanding customer decisions, enhancing research, making predictions and targeting key audiences for advertising.

How is Big Data Used by Organizations?

Finance

The financial and insurance industries use big data and predictive analytics for fraud detection, risk assessments, credit ratings, brokerage services, and blockchain technology, among other uses. Financial institutions are also using big data to improve their cybersecurity efforts and personalize financial decisions for customers.

Health care

Hospitals, researchers and pharmaceutical companies are using big data solutions to improve and advance healthcare. Thanks to access to patient population data, existing treatments are being improved, more effective research is being done on diseases such as cancer and Alzheimer’s, and new drugs are being developed. Big data is also used by medical researchers to identify disease symptoms and risk factors, and by doctors to help diagnose diseases and medical conditions in patients. In addition, data from electronic health records, social media sites, the web, and other sources provides healthcare organizations and government agencies with up-to-date information on infectious disease threats or outbreaks.

Media and Entertainment

If you’ve ever used Netflix, Hulu, or any other streaming service that offers recommendations, you’ve seen big data at work. Media companies analyze our reading, watching and listening habits to create personalized experiences. Netflix even uses data about graphics, titles, and colors to make decisions about customer preferences.

Agriculture

From agricultural engineering to predicting crop yields with astonishing accuracy, big data and automation are rapidly advancing the agricultural industry. Many European countries, for instance, have become better able to apply technological developments in agriculture. With the influx of data over the past two decades, scientists and researchers in many countries are turning to big data to combat hunger and malnutrition. By supporting open and unrestricted access to global nutrition and agricultural data, Global Open Data for Agriculture and Nutrition (GODAN) helps researchers in the fight to end hunger around the world.

Fraud Detection and Prevention

Credit card companies face a great deal of fraud, and big data technologies are used to detect and prevent it. Previously, credit card companies would track all transactions, and if a suspicious transaction was detected, they would call the cardholder to confirm whether the transaction was genuine. Now, buying patterns are observed and the areas affected by fraud are analyzed using big data analytics. This is very helpful in preventing and detecting scams.
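To make the idea of observing buying patterns a little more concrete, here is a minimal sketch in Python. It is not how any particular card issuer works: the `flag_suspicious` function, the z-score rule, the threshold of 3.0 and the sample amounts are all assumptions chosen for illustration. It simply flags a new transaction whose amount is far outside a customer's usual range.

```python
from statistics import mean, stdev

def flag_suspicious(history, new_amount, threshold=3.0):
    """Return True if new_amount deviates strongly from past purchase amounts.

    `history` is a list of a customer's previous transaction amounts. The
    z-score rule and the default threshold are illustrative assumptions.
    """
    if len(history) < 2:
        return False  # not enough data to estimate a spending pattern
    avg = mean(history)
    spread = stdev(history)
    if spread == 0:
        return new_amount != avg  # every past purchase had the same amount
    z_score = abs(new_amount - avg) / spread
    return z_score > threshold

past_purchases = [42.0, 38.5, 51.0, 45.25, 40.0, 47.75]  # hypothetical data
print(flag_suspicious(past_purchases, 44.0))   # a typical amount
print(flag_suspicious(past_purchases, 950.0))  # far outside the usual range
```

Real fraud systems look at many more signals than the amount (location, merchant, time of day), but the principle of comparing new activity against an established pattern is the same.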

Weather Forecast

Big data technologies are used to predict the weather. Large amounts of climate data are fed in and averaged to produce a forecast. This can also be useful for predicting natural disasters such as floods.

Public Sector

Big data is used by many governments and across the public sector. It opens up many possibilities in areas such as power research and economic promotion. Other government uses include emergency response, crime prevention and smart city initiatives.

In the energy industry, big data helps oil and gas companies identify potential drilling locations and monitor pipeline operations; similarly, utilities use it to monitor power grids. Manufacturers and shipping companies rely on big data to manage their supply chains and optimize delivery routes.

Big Data Examples: Databases, Documents, E-Mails…

Big data comes from countless sources, for example customer databases, documents, emails, medical records, internet clickstream logs, mobile apps and social networks. Examples of its use include personalized e-commerce shopping experiences; financial market modeling; compiling trillions of data points to accelerate cancer research; media recommendations from streaming services such as Spotify, Hulu and Netflix; predicting crop yields for farmers; analyzing traffic patterns to reduce congestion in cities; and data tools that recognize retail shopping habits and determine optimum crop placement.

In addition to data from internal systems, big data environments often contain external data about consumers, financial markets, weather and traffic conditions, geographic information, scientific research, and more. Images, videos, and audio files are also big data formats, and many big data applications include streaming data that is continuously processed and collected.

Big Data Terms

Inevitably, much of the confusion around big data comes from the variety of new (for most) terms that have popped up around it. Some of the most popular Big Data terms include:

- Algorithm: Mathematical formula run by software to analyze data

- Amazon Web Services (AWS): A collection of cloud computing services that help businesses perform large-scale computing without the need for on-premises storage or processing power

- Cloud (Cloud-Computing): Running software on remote servers, not locally

- Hadoop: A collection of programs that allow the storage, retrieval and analysis of very large datasets

- Internet of Things: Refers to objects (such as sensors) that collect, analyze and transmit their own data (usually without human input).

- Predictive Analytics: Using analytics to predict trends or future events

- Structured vs. Unstructured data: Structured data is anything that can be arranged in a table so that it relates to other data in the same table. Unstructured data is everything that cannot be arranged this way.

- Web scraping: The process of automating the collection and structuring of data from websites (usually by writing code)

How is Big Data Used?

The diversity of big data makes it complex by nature, creating the need for systems that can handle various structural and semantic differences. Big data often relies on specialized NoSQL databases that can store data without requiring strict adherence to a particular model. This provides the flexibility needed to consistently analyze seemingly disparate sources of information and gain a holistic view of what is happening, how to act and when to act.
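The flexibility described above can be illustrated with a small Python sketch. It does not use a real NoSQL database; plain dictionaries stand in for schema-free documents, and the `find` helper and the sample records are hypothetical. The point is that records with completely different fields can live in the same collection and still be queried together.

```python
import json

# Hypothetical records from three different sources; each has its own fields.
documents = [
    {"type": "transaction", "customer_id": 17, "amount": 59.90},
    {"type": "tweet", "customer_id": 17, "text": "Love the new app!", "likes": 12},
    {"type": "sensor", "device": "thermostat-3", "readings": [21.5, 21.7, 22.0]},
]

def find(docs, **criteria):
    """Return the documents whose fields match all given key/value pairs."""
    return [d for d in docs if all(d.get(k) == v for k, v in criteria.items())]

# Documents without a "customer_id" field are simply skipped, not rejected.
for doc in find(documents, customer_id=17):
    print(json.dumps(doc))
```

In a relational table, the tweet and the sensor reading would each need their own schema; here they share one collection and the query tolerates missing fields.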

How is Big Data Stored?

Big data is usually stored in a central repository, often called a data lake. Data warehouses are usually built on relational databases and contain only structured data, while such repositories can support a variety of data types. A central repository can also be integrated with other platforms, including relational databases or a data warehouse. Data in big data systems can be left in its raw form and then filtered and organized as needed for particular analytical uses. In other cases it is preprocessed using data mining tools and data preparation software so that it is ready for applications that are run regularly.

How is Big Data Processed?

Big data processing places heavy demands on the computing infrastructure. Organizations can deploy their own cloud-based systems or use Big Data as a managed service from cloud providers. Cloud users can scale as many servers as needed to complete big data analytics projects. The business only pays for the storage and processing time it uses, and cloud instances can be shut down until needed again.

How Big Data Analysis Works

To derive valid and relevant results from big data analytics applications, data scientists and other data analysts must have a detailed understanding of the data available and an idea of what they are looking for in it. This makes data preparation, which includes profiling, cleaning, validation, and transformation of datasets, a crucial first step in the analytical process.
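As a rough illustration of these preparation steps, the following Python sketch profiles a handful of raw records, then cleans, validates and transforms them. The field names, the sample rows and the validation rules are assumptions made for the example, not a standard pipeline.

```python
from datetime import datetime

raw_rows = [  # hypothetical raw records
    {"customer": " Alice ", "amount": "19.99", "date": "2023-01-05"},
    {"customer": "bob", "amount": "n/a", "date": "2023-01-06"},
    {"customer": "", "amount": "5.00", "date": "2023-01-07"},
    {"customer": "Carol", "amount": "42", "date": "2023-13-01"},
]

def profile(rows):
    """Profiling: count empty values per column."""
    return {col: sum(1 for r in rows if not r[col].strip()) for col in rows[0]}

def prepare(rows):
    """Clean, validate and transform rows; drop those that fail validation."""
    prepared = []
    for row in rows:
        name = row["customer"].strip().title()  # cleaning
        try:
            amount = float(row["amount"])       # transformation to a number
            date = datetime.strptime(row["date"], "%Y-%m-%d").date()
        except ValueError:
            continue                            # validation failed: bad value
        if not name or amount < 0:
            continue                            # validation failed: bad record
        prepared.append({"customer": name, "amount": amount, "date": date})
    return prepared

print(profile(raw_rows))
print(prepare(raw_rows))
```

Of the four sample rows, only the first survives: the others have an unparseable amount, a missing customer name, or an impossible date. Dropping bad rows is only one possible policy; real projects often repair or quarantine them instead.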

After data is collected and prepared for analysis, various data science and advanced analytics disciplines can be applied to run different applications, using tools that enable big data analytics. These disciplines include machine learning and its deep learning branch, predictive modelling, data mining, statistical analysis, streaming analytics, text mining, and more.

Different branches of analytics that can be done with Big Data clusters include:

Comparative analysis: Examines customer behavior metrics and real-time customer engagement to compare a company’s products, services, and branding with those of its competitors.

Social media listening: By analyzing what people are saying about a business or product on social media, it can help identify potential issues and target audiences for marketing campaigns.

Marketing tactics: Provides data that can be used to improve campaigns and promotional offers for products, services, and other business initiatives.

Sentiment analysis: All the data collected about customers can be analyzed to reveal how they feel about a company or brand, their level of customer satisfaction, potential problems, and how customer service can be improved.

Big Data Management Technologies

Hadoop, an open source distributed processing framework released in 2006, was initially at the heart of most big data architectures. The development of Spark and other processing engines has pushed MapReduce, the engine built into Hadoop, into the background. The result is an ecosystem of big data technologies that can be used for different applications but are often deployed together. Big data platforms and managed services offered by IT vendors combine many of these technologies into a single package, primarily for use in the cloud. Some of them are: Amazon EMR (formerly Elastic MapReduce), Cloudera Data Platform, Google Cloud Dataproc, HPE Ezmeral Data Fabric (formerly MapR Data Platform) and Microsoft Azure HDInsight.
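For readers curious about what MapReduce actually does, here is a tiny single-machine sketch of the programming model in Python, using the classic word count example. It is not Hadoop's API; the function names `map_phase`, `shuffle` and `reduce_phase` and the sample documents are made up for illustration. In a real cluster, each phase would run in parallel across many machines.

```python
from collections import defaultdict
from itertools import chain

def map_phase(document):
    """Map: emit a (word, 1) pair for every word in one document."""
    return [(word.lower(), 1) for word in document.split()]

def shuffle(pairs):
    """Shuffle: group all emitted values by key."""
    groups = defaultdict(list)
    for key, value in pairs:
        groups[key].append(value)
    return groups

def reduce_phase(groups):
    """Reduce: combine the values of each key into a single count."""
    return {key: sum(values) for key, values in groups.items()}

documents = ["big data is big", "data is everywhere"]  # hypothetical input
mapped = chain.from_iterable(map_phase(doc) for doc in documents)
word_counts = reduce_phase(shuffle(mapped))
print(word_counts)
```

Because each document is mapped independently and each key is reduced independently, the work can be split across machines, which is what made the model attractive for very large datasets.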

What Are the Big Data Challenges?

In addition to processing capacity issues, designing a big data architecture is a major challenge for users. Big data systems must be tailored to the specific needs of an organization. Deploying and managing big data systems also requires new skills compared to those typically possessed by database administrators and developers focused on relational software. Both of these issues can be mitigated by using a managed cloud service, but IT managers need to keep a close eye on cloud usage to ensure costs don’t spiral out of control. In addition, moving on-premises datasets and processing workloads to the cloud is often a complex process.

Other challenges in managing big data systems include making data accessible to data scientists and analysts, especially in distributed environments that contain a mix of different platforms and data stores. To help analysts find relevant data, data management and analytics teams are increasingly creating data catalogs that include metadata management and data lineage functions. The process of integrating large datasets also becomes complex, especially when data variety and velocity are factors.

Keys to Effective Big Data Strategy

Developing a big data strategy in an organization requires an understanding of business goals and the data currently in use, as well as an evaluation of the need for additional data to help achieve those goals. Other steps include prioritizing planned use cases and applications; identifying the new systems and tools needed; creating a deployment roadmap; and assessing internal skills to see whether retraining or hiring is necessary.

A data governance program and related data quality management processes should also be a priority, to ensure that large data sets are clean, consistent and used properly. Best practices for managing and analyzing big data include focusing on business needs for information rather than on available technologies, and using data visualization to assist with data discovery and analysis.

Big Data Collection Legal Practices and Regulations

As the collection and use of big data increases, so does the potential for data misuse. Public outcry over data breaches and other privacy violations led the European Union to adopt the General Data Protection Regulation (GDPR), a data privacy law that took effect in May 2018. GDPR limits the types of data that organizations can collect and requires opt-in consent from individuals, or compliance with other specified grounds for collecting personal data. It also includes a right-to-be-forgotten provision, which allows EU residents to ask companies to delete their data.

While the United States does not have similar federal laws, the California Consumer Privacy Act (CCPA) seeks to give California residents greater control over the collection and use of their personal information by companies doing business in the state. The CCPA was signed into law in 2018 and entered into force on 1 January 2020. To ensure they comply with such laws, businesses need to carefully manage their big data collection process. Controls should be in place to identify regulated data and prevent unauthorized workers from accessing it.

Big Data History

Data collection can be traced back to ancient civilizations that used tally sticks to track food, but the history of big data really begins much later. Here is a brief timeline of some of the key moments that got us where we are today.

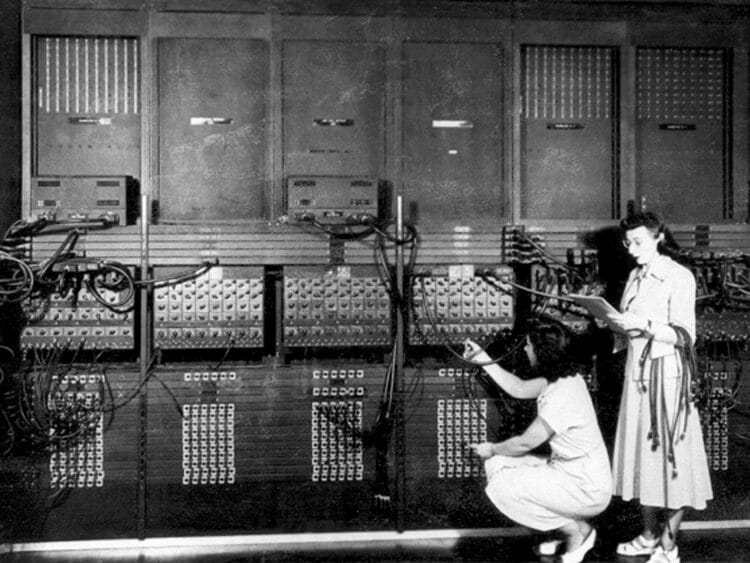

1881: One of the earliest instances of data overload occurred during the 1880 census. The Hollerith tabulating machine was invented, and the job of processing census data was reduced from ten years of labor to less than a year.

1928: German-Austrian engineer Fritz Pfleumer developed a method of storing data magnetically on tape, paving the way for how digital data would be stored for the next century.

1948: Shannon’s ‘Information Theory’ was developed and this theory laid the foundation for the widely used information infrastructure today.

1970: Edgar F. Codd, a mathematician at IBM, introduced the ‘relational database’, showing how information in large databases can be accessed without knowing its structure or location.

1976: Commercial use of Material Requirements Planning (MRP) systems, developed to organize and plan information, became more common as a way to streamline business operations.

1989: The World Wide Web was created by Tim Berners-Lee.

2001: Doug Laney presented a paper describing the ‘3 Vs of Data’, which have become the key features of big data. In the same year, the term ‘software-as-a-service’ was coined.

2005: Hadoop, an open source software framework for storing large datasets, was created.

2007: The term ‘Big Data’ was introduced to a wide audience in Wired’s article ‘The End of Theory: The Data Deluge Makes the Scientific Method Obsolete’.

2008: A team of computer science researchers published the paper ‘Big Data Computing: Creating Revolutionary Breakthroughs in Commerce, Science and Society’, describing how big data is fundamentally changing the way companies and organizations do business.

2010: Google CEO Eric Schmidt revealed that every two days, humans generate as much information as they created from the beginning of civilization until 2003.

2014: More and more companies began moving their Enterprise Resource Planning systems to the cloud. The Internet of Things became widely used, with an estimated 3.7 billion connected devices or things in use, transmitting massive amounts of data every day.

2016: The Obama administration released the ‘Federal Big Data Research and Development Strategic Plan’, designed to enable the research and development of big data applications that will directly benefit society and the economy.

2017: IBM research says 2.5 quintillion bytes of data are created daily, and 90% of the world’s data was created in the last two years.

Why Big Data Has Become So Popular

The recent popularity of big data is largely due to new developments in technology and infrastructure that allow large amounts of data to be processed, stored and analyzed. Computing power has increased significantly over the past five years while its price has dropped, making it more accessible to small and medium-sized companies. As technology has become more powerful and cheaper, numerous companies have sprung up creating products and services that help businesses take advantage of all that big data has to offer.

The Human Aspect of Big Data Management and Analytics

Ultimately, the business value and benefits of big data initiatives depend on the employees tasked with managing and analyzing data. Some big data tools enable less technical users to run predictive analytics applications, or help businesses set up a suitable infrastructure for big data projects while minimizing the need for hardware and distributed software expertise. Big data is sometimes contrasted with small data, a term used to describe datasets that can be easily used for self-service BI and analytics. Let’s finish our article with one of the most frequently used phrases when it comes to big data: “Big data is for machines; small data is for people.”